By John R. Fischer, Senior Reporter | August 02, 2019

Utilizing an effective AI system could decrease reading time for DBT interpretations, says study.

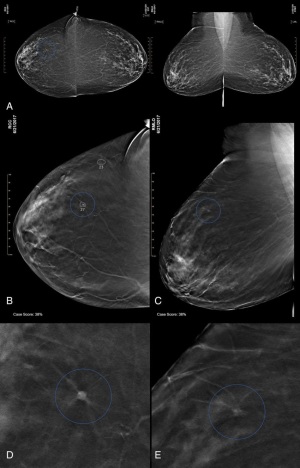

Users of digital breast tomosynthesis could appreciate shorter reading times with the addition of AI, according to a new study.

Capable of improving cancer detection and reducing false-positive recalls compared to digital mammography alone, DBT may take almost twice as long to interpret due to the time needed to scroll through all its images.


“Tomosynthesis is certainly becoming the new standard of care, the new better mammogram. But it does take longer to read than conventional 2D mammography,” study lead author Dr. Emily Conant, professor and chief of breast imaging in the department of radiology at the Perelman School of Medicine at the University of Pennsylvania, told HCB News. “Most of us see the longer reading time as a trade-off for the improved accuracy. We’re okay with the extra time since we have fewer false-positive call-backs, and we find more cancers. But scrolling through the DBT stack definitely takes more time, and in a busy practice, efficiency is incredibly important.”

To address the longer reading time, Conant and her team developed a deep learning system that mines vast amounts of data to identify subtle patterns beyond human recognition, and used those patterns to train the AI system to detect suspicious findings in DBT images.

They then tested its performance against that of 24 radiologists, including 13 breast subspecialists, in reading 260 DBT exams, which included 65 cancer cases. Each radiologist read the exams both with and without AI assistance.

Readings made with AI assistance showed improved accuracy and shorter reading times, with sensitivity increasing from 77 percent without it to 85 percent with it. Specificity also increased from 62.7 to 69.6 percent, while the recall rate for non-cancers, or the rate at which women were called in for follow-up exams based on benign findings, dropped from 38 to 30.9 percent. Average reading time decreased from over 64 seconds without AI to 30.4 seconds with it, and radiologist performance – measured by mean AUC – went up from 0.795 to 0.852.
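To put those figures side by side, the short Python sketch below computes the absolute and relative change for each reported metric. It is only an illustration of the arithmetic, not part of the study: the dictionary keys and function names are made up for this example, and because the article gives the unassisted reading time only as "over 64 seconds," the 64.0 used here is an assumed lower bound, so the time reduction it yields (roughly 52.5 percent) is approximate.

```python
# Figures as reported in the article: (without AI, with AI).
# Key names are hypothetical labels chosen for this sketch.
reported = {
    "sensitivity_pct": (77.0, 85.0),
    "specificity_pct": (62.7, 69.6),
    "noncancer_recall_pct": (38.0, 30.9),
    "mean_auc": (0.795, 0.852),
    # The article says "over 64 seconds"; 64.0 is an assumed lower bound.
    "reading_time_s": (64.0, 30.4),
}


def change(before, after):
    """Return (absolute change, relative change in percent of 'before')."""
    absolute = after - before
    relative = 100.0 * absolute / before
    return absolute, relative


def summarize(metrics):
    """Map each metric name to its (absolute, relative) change."""
    return {name: change(b, a) for name, (b, a) in metrics.items()}


if __name__ == "__main__":
    for name, (abs_c, rel_c) in summarize(reported).items():
        print(f"{name}: {abs_c:+.3f} absolute, {rel_c:+.1f}% relative")
```

Note that the changes in sensitivity, specificity and recall rate are differences in percentage points, while the relative column expresses each change as a share of the unassisted value.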

“The findings in this reader study that the accuracy improved and the reading times decreased is really important,” said Conant. “Anything that provides more accurate interpretation is better for patients. The impact of improved efficiency is really appreciated at the reader and practice levels. Also, if we become more efficient at reading some cases, we may then be able to spend additional time on the more complex cases, further improving accuracy on those. It will be interesting to see how AI impacts not only the accuracy and efficiency of interpreting an entire workload in a real-world, clinical setting, but also, specifically, what the impact is on the more complex cases.”

The approach is expected to improve with greater exposure to larger data sets, enhancing the potential impact it could have on patient care. Further testing in clinics will be required. Some analyses are currently underway, looking at the specific readers and their experiences.

The findings were published in the journal Radiology: Artificial Intelligence.